CompTIA DY0-001 Practice Test 2026

Updated on: 5-May-2026

Prepare smarter and boost your chances of success with our CompTIA DY0-001 practice test 2026. These CompTIA DataAI exam questions help you assess your knowledge, pinpoint strengths, and target areas for improvement. Surveys and user data from multiple platforms show that individuals who use the DY0-001 practice exam are 40–50% more likely to pass on their first attempt.

Start practicing today and take the fast track to becoming CompTIA DY0-001 certified.

CompTIA DataAI Exam: 89 questions | 1,890 already prepared | Rating: 4.8/5.0


CompTIA DataAI Exam Practice Questions

CompTIA DataAI DY0-001 Official Exam Blueprint Weight & Our Practice Questions

| CompTIA DataAI DY0-001 Domain | Official Exam Weight | Our Practice Questions | Subtopics Covered by Our Practice Questions |
|---|---|---|---|
| Mathematics and Statistics | 17% | 15 | Probability, Descriptive statistics, Inferential statistics, Hypothesis testing, Regression analysis, Correlation, Distributions, Bayesian statistics, Linear algebra, Calculus concepts, Statistical modeling, Confidence intervals, Data normalization, Sampling techniques, Statistical significance |
| Modeling, Analysis, and Outcomes | 24% | 24 | Predictive modeling, Data analysis, Feature engineering, Model evaluation, Outcome interpretation, Data visualization, Classification models, Regression models, Clustering, Dimensionality reduction, Business outcomes, KPI analysis, Analytical workflows, Data storytelling, Reporting and dashboards |
| Machine Learning | 24% | 19 | Supervised learning, Unsupervised learning, Reinforcement learning, Neural networks, Deep learning, Natural language processing (NLP), Computer vision, Model training, Model tuning, Bias and variance, Overfitting and underfitting, Decision trees, Random forests, Support vector machines, AI model evaluation |
| Operations and Processes | 22% | 20 | MLOps, Data pipelines, Data governance, Data preparation, Data engineering, Model deployment, CI/CD workflows, Cloud AI services, Data security, Automation, Workflow orchestration, Version control, Monitoring and logging, Ethical AI, Compliance and governance |
| Specialized Applications of Data Science | 13% | 11 | Generative AI, Recommendation systems, Time-series forecasting, Fraud detection, AI ethics, Healthcare analytics, Financial modeling, Retail analytics, Robotics, Autonomous systems, Specialized AI applications, Industry-specific AI solutions |

This exam validates your data skills across analytics, governance, and visualization. This practice test covers DY0-001 objectives such as data mining, data profiling, quality control, and reporting. You will work through questions on cleaning datasets, using visualization tools, applying statistical methods, and communicating insights to stakeholders. Each answer includes a detailed explanation that reinforces best practices for real-world data projects. By simulating the actual exam experience, the test builds your confidence and reveals knowledge gaps before test day. Whether you struggle with data governance or visualization techniques, it helps you focus your preparation effectively.

Stories of Success

Data concepts are fundamental for analytics roles. Preptia DY0-001 practice questions covered data types, governance, and visualization in a practical way. The questions were clear and aligned with the exam objectives. Passed on my first try!

Andrew Mitchell, Data Analyst | Chicago, IL

Data analytics fundamentals became clearer with Preptia.com practice tests for Data+ (DY0-001). The test questions covered data mining, visualization, and governance topics in a very practical way.

Sofia Petrova | Bulgaria

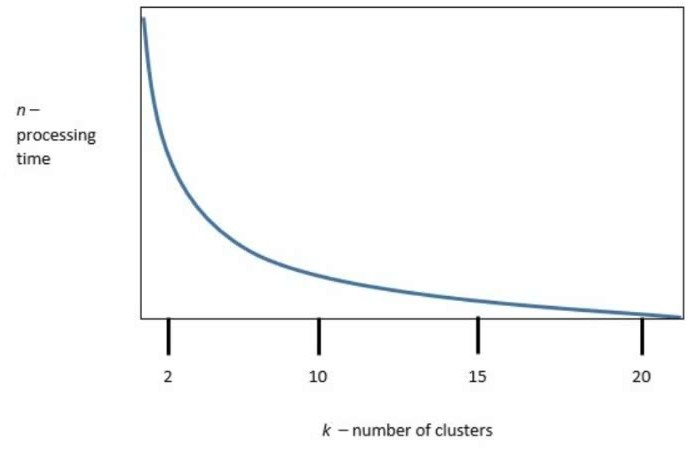

k is the number of clusters, and n is the processing time required to run the model. Which of the following is the best value of k to optimize both accuracy and processing requirements?

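The exhibit for this question is not reproduced here, so the sketch below does not reproduce or answer its options. Questions of this kind are typically reasoned about with the elbow method: as k grows, clustering error keeps falling but with diminishing returns, while run time tends to grow, so a balanced choice is the k where the error curve bends. This is a minimal Python sketch under stated assumptions: it uses synthetic 2-D data with three well-separated groups, a hand-rolled k-means (Lloyd's algorithm with k-means++ seeding), within-cluster sum of squares (WCSS) as a stand-in for accuracy, and wall-clock seconds as a stand-in for n. The helper names `make_blobs`, `kmeans_wcss`, `scan_k`, and `elbow_k` are hypothetical and are not part of any library or exam material.

```python
import math
import random
import time

# Hypothetical helpers for illustration only; not from any exam material or library.


def make_blobs(centers, n_per_center, spread=1.0, seed=0):
    """Generate synthetic 2-D Gaussian blobs (illustrative data, not from the exam)."""
    rng = random.Random(seed)
    return [(cx + rng.gauss(0, spread), cy + rng.gauss(0, spread))
            for cx, cy in centers for _ in range(n_per_center)]


def _init_plus_plus(points, k, rng):
    """k-means++ seeding: each new centroid is drawn with probability proportional to squared distance."""
    centroids = [rng.choice(points)]
    while len(centroids) < k:
        d2 = [min(math.dist(p, c) ** 2 for c in centroids) for p in points]
        centroids.append(rng.choices(points, weights=d2, k=1)[0])
    return centroids


def kmeans_wcss(points, k, n_init=5, max_iter=30, seed=0):
    """Lloyd's k-means with k-means++ seeding; return the lowest WCSS over n_init restarts."""
    rng = random.Random(seed)
    best = math.inf
    for _ in range(n_init):
        centroids = _init_plus_plus(points, k, rng)
        for _ in range(max_iter):
            # Assignment step: group each point with its nearest centroid.
            clusters = [[] for _ in range(k)]
            for p in points:
                nearest = min(range(k), key=lambda i: math.dist(p, centroids[i]))
                clusters[nearest].append(p)
            # Update step: move each centroid to its cluster mean (keep it if the cluster is empty).
            updated = [
                (sum(x for x, _ in m) / len(m), sum(y for _, y in m) / len(m)) if m else centroids[i]
                for i, m in enumerate(clusters)
            ]
            if updated == centroids:
                break  # converged: assignments no longer change the centroids
            centroids = updated
        wcss = sum(min(math.dist(p, c) ** 2 for c in centroids) for p in points)
        best = min(best, wcss)
    return best


def scan_k(points, k_values):
    """Return (k, wcss, seconds) for each k so error and processing time can be compared."""
    results = []
    for k in k_values:
        start = time.perf_counter()
        wcss = kmeans_wcss(points, k)
        results.append((k, wcss, time.perf_counter() - start))
    return results


def elbow_k(ks, scores):
    """Pick the k whose normalized (k, score) point lies farthest from the chord joining the endpoints."""
    x0, x1 = ks[0], ks[-1]
    y_lo, y_hi = min(scores), max(scores)
    xs = [(k - x0) / (x1 - x0) for k in ks]
    ys = [(s - y_lo) / (y_hi - y_lo) for s in scores]
    # Perpendicular distance from each (x, y) to the line through the first and last points.
    ax, ay, bx, by = xs[0], ys[0], xs[-1], ys[-1]
    norm = math.hypot(bx - ax, by - ay)
    dists = [abs((by - ay) * x - (bx - ax) * y + bx * ay - by * ax) / norm for x, y in zip(xs, ys)]
    return ks[dists.index(max(dists))]


if __name__ == "__main__":
    data = make_blobs([(0, 0), (10, 0), (5, 8)], n_per_center=50)
    rows = scan_k(data, range(1, 9))
    for k, wcss, secs in rows:
        print(f"k={k}: WCSS={wcss:.1f}, time={secs * 1000:.1f} ms")
    print("elbow at k =", elbow_k([r[0] for r in rows], [r[1] for r in rows]))
```

`elbow_k` uses one simple geometric heuristic (the point farthest from the straight line joining the first and last points of the normalized curve); it is one of several ways to locate an elbow, and on noisy curves the choice may still call for judgment rather than a single rule.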