CompTIA CNX-001 Practice Test 2026

Updated On: 5-May-2026

Prepare smarter and boost your chances of success with our CompTIA CNX-001 practice test 2026. These CompTIA CloudNetX Exam test questions help you assess your knowledge, pinpoint strengths, and target areas for improvement. Surveys and user data from multiple platforms show that individuals who use CNX-001 practice exams are 40–50% more likely to pass on their first attempt.

Start practicing today and take the fast track to becoming CompTIA CNX-001 certified.

1840 already prepared

84 Questions

CompTIA CloudNetX Exam

4.8/5.0

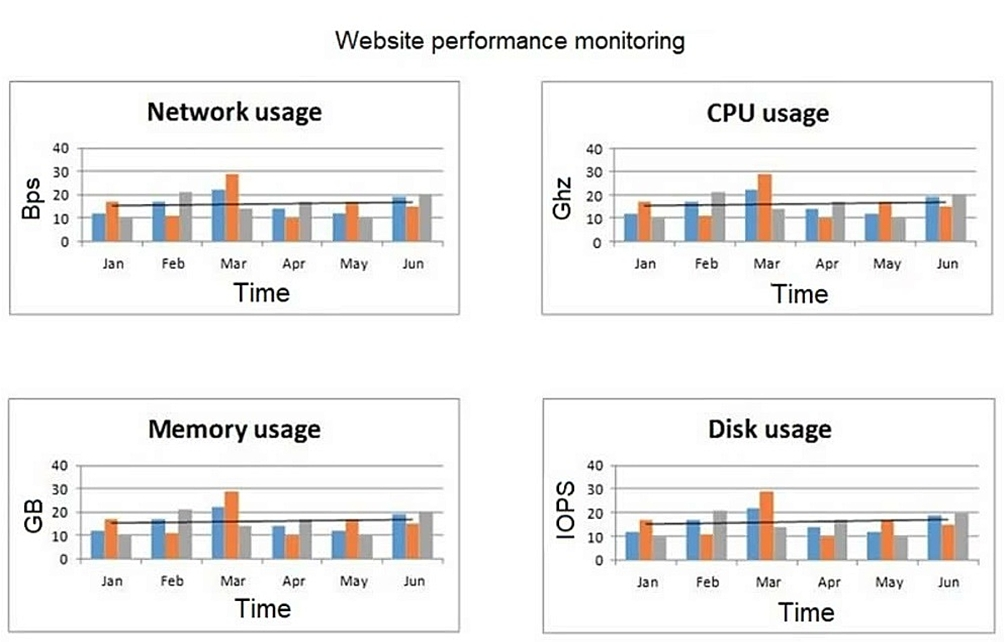

A network engineer at an e-commerce organization must improve the following dashboard due to a performance issue on the website:

(Refer to the image: Website performance monitoring dashboard showing metrics like network usage, CPU usage, memory usage, and disk usage over time.)

Which of the following is the most useful information to add to the dashboard for the operations team?

A. 404 errors

B. Concurrent users

C. Number of orders

D. Number of active incidents

Explanation:

When diagnosing a general "performance issue" on a website, correlating system resource metrics (CPU, Memory, Network, Disk) with user load is the most critical piece of information for the operations team.

Root Cause Analysis:

The existing dashboard shows the effect (high resource usage). Concurrent users shows the most likely cause. A spike in concurrent users would logically explain a corresponding spike in resource utilization, leading to slow page loads and a poor user experience. Without this metric, the team cannot determine if high resource usage is expected (due to high traffic) or unexpected (due to an inefficient application or infrastructure problem).

Actionable Data:

This information directly guides the next steps for investigation:

If resource spikes correlate perfectly with user spikes, the solution may be to scale up infrastructure (e.g., add more web servers) to handle the load.

If resources are high while user count is low, it points to a severe application or system problem (e.g., a memory leak, inefficient code, or a misconfigured service) that needs immediate software-level investigation.
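The correlation check described above can be sketched in a few lines of Python. This is a minimal illustration, not a monitoring implementation: the sample values, the `triage` helper, and the 0.8 threshold are all hypothetical choices made for the example.

```python
from statistics import mean


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("series must have equal length of at least 2")
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a flat series carries no correlation signal
    return cov / (var_x * var_y) ** 0.5


def triage(cpu_pct, concurrent_users, threshold=0.8):
    """Classify a resource spike; the threshold is an assumed cut-off."""
    if pearson(cpu_pct, concurrent_users) >= threshold:
        return "load-driven: consider scaling out"
    return "not load-driven: investigate application or system"


# Hypothetical dashboard samples taken at the same timestamps.
users = [100, 200, 400, 800, 1600]
cpu_tracking_load = [10, 20, 40, 70, 95]   # CPU rises with users
cpu_stuck_high = [90, 92, 91, 93, 90]      # CPU high regardless of users

print(triage(cpu_tracking_load, users))
print(triage(cpu_stuck_high, users))
```

Without the concurrent-users series there is nothing to correlate against, which is why that metric is the one worth adding to the dashboard.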

Why the other options are less useful for diagnosing performance:

A. 404 errors:

404 errors indicate "page not found" conditions. While important for monitoring site health and user experience, they are primarily related to content availability or configuration errors (e.g., broken links). A large number of 404s might consume some resources, but they are not a primary or direct cause of broad performance issues like slow page rendering or high CPU load across the entire site.

C. Number of orders:

This is a business metric, not an infrastructure performance metric. While a sales surge might cause a rise in traffic, the orders themselves are the result of user activity. The direct technical driver of performance is the number of users generating requests, not the number of transactions that complete successfully. It is less directly correlated to system load than concurrent users.

D. Number of active incidents:

This is an operational metric for tracking the response to problems. Displaying it on a performance dashboard adds no value for diagnosing the root cause of a new performance issue. It tells you that problems exist but provides no technical data to explain why.

In summary:

For an operations team tasked with solving a performance issue, the single most useful additional metric to correlate with system resources is Concurrent Users. It provides the essential context needed to distinguish between expected high load and anomalous system behavior.

A cafe uses a tablet-based point-of-sale system. Customers are complaining that their food is taking too long to arrive. During an investigation, the following is noticed:

Every kitchen printer did not print the orders

Payments are processing correctly

The cloud-based system has record of the orders

This issue occurred when the cafe was busy

Which of the following is the best way to mitigate this issue?

A. Updating the application

B. Adding an access point exclusively for the kitchen

C. Upgrading the kitchen printers' wireless dongles

D. Assigning the kitchen printers static IP addresses

Explanation:

The symptoms point to a classic case of wireless network congestion or poor coverage, especially in the kitchen area. Let's analyze the clues:

The Problem: Kitchen printers did not print orders.

Key Evidence:

Payments are processing correctly, and the cloud-based system has a record of the orders: This proves the central application, payment gateway, and internet connection are working. The orders successfully left the tablet and reached the cloud server.

The issue occurred when the cafe was busy:

This is the critical clue. During busy times, there are more devices (customer phones on guest Wi-Fi, more tablets in use, more staff devices) connected to the wireless network. This increased load can congest the Wi-Fi channel or overwhelm a single access point (AP), leading to dropped packets and failed connections for devices with weaker signals, like the printers in the kitchen.

The Root Cause:

The most likely scenario is that the kitchen, which might be at the edge of the Wi-Fi coverage, experiences significant packet loss when the network is under heavy load. The print commands from the cloud server or the tablets are not reliably reaching the printers.

Why B is the best mitigation:

Adding a dedicated access point in or near the kitchen creates a strong, reliable signal specifically for the mission-critical printers. This solves the coverage issue and offloads their traffic from the main, congested AP. It ensures that even during peak hours, the print commands have a clear and strong path to the printers.

Why the other options are less effective:

A. Updating the application:

Since payments and cloud recording work, the application itself is functioning correctly. An update is unlikely to fix a network infrastructure problem.

C. Upgrading the kitchen printers' wireless dongles:

While newer dongles might have better antennas, this is a minor fix that may not overcome the fundamental issue of network congestion and poor coverage. Adding a better AP is a more robust and scalable solution.

D. Assigning the kitchen printers static IP addresses:

Dynamic (DHCP) vs. static IP addressing has no bearing on wireless signal strength, network congestion, or the reliability of packet delivery. The printers are likely already getting IP addresses correctly (since they sometimes work), so this change would not mitigate the busy-hour failure.

Reference:

This is a fundamental principle of wireless network design for business-critical operations. Best practices, as outlined by vendors like Cisco Meraki or Ubiquiti, dictate placing APs to ensure strong coverage (-67 dBm or better) in all areas where reliable connectivity is required, especially for real-time devices like POS systems and printers. Isolating high-priority devices on a dedicated AP or SSID is a common strategy to mitigate interference and congestion.

A network architect is designing a solution to place network core equipment in a rack inside a data center. This equipment is crucial to the enterprise and must be as secure as possible to minimize the chance that anyone could connect directly to the network core. The current security setup is:

In a locked building that requires sign in with a guard and identification check.

In a locked data center accessible by a proximity badge and fingerprint scanner.

In a locked cabinet that requires the security guard to call the Chief Information Security Officer (CISO) to get permission to provide the key.

Which of the following additional measures should the architect recommend to make this equipment more secure?

A. Make all engineers with access to the data center sign a statement of work.

B. Set up a video surveillance system that has cameras focused on the cabinet.

C. Have the CISO accompany any network engineer that needs to do work in this cabinet.

D. Require anyone entering the data center for any reason to undergo a background check.

Explanation:

The goal is to minimize the chance that anyone could connect directly to the network core by enhancing physical security. The current setup already includes multiple layers of access control (guarded building, biometrics for the data center, and a locked cabinet with approval from the CISO). However, there is still a risk during authorized access or potential social engineering.

Why B is Correct:

Video surveillance focused on the cabinet adds a critical layer of security:

Deterrence:

The presence of cameras discourages malicious actions (e.g., unauthorized connections) even by authorized personnel.

Monitoring:

Continuous recording allows for auditing and review of all activities at the cabinet, ensuring accountability.

Incident Response:

If a security breach occurs, footage can be used to investigate and identify the perpetrator.

This measure directly addresses the risk of physical tampering with the core equipment.

Why the Other Options Are Incorrect:

A. Make all engineers sign a statement of work:

A statement of work (SOW) defines project scope and deliverables; it is not a security measure. It does not prevent physical access or tampering.

C. Have the CISO accompany any network engineer:

While this ensures supervision, it is impractical and not scalable. The CISO cannot be available at all times, and it does not provide continuous monitoring or deterrence when the CISO is not present.

D. Require background checks for anyone entering the data center:

Background checks are important for pre-screening personnel but are a preventive measure, not a detective or deterrent one. They do not prevent real-time tampering or provide monitoring after access is granted.

Reference:

Physical security best practices (e.g., NIST SP 800-53) recommend video surveillance as a complementary control to access systems for monitoring sensitive areas.

In high-security environments, video surveillance is standard for protecting critical infrastructure, providing both deterrence and forensic capabilities.

Server A (10.2.3.9) needs to access Server B (10.2.2.7) within the cloud environment since they are segmented into different network sections. All external inbound traffic must be blocked to those servers. Which of the following need to be configured to appropriately secure the cloud network? (Choose two.)

A. Network security group rule: allow 10.2.3.9 to 10.2.2.7

B. Network security group rule: allow 10.2.0.0/16 to 0.0.0.0/0

C. Network security group rule: deny 0.0.0.0/0 to 10.2.0.0/16

D. Firewall rule: deny 10.2.0.0/16 to 0.0.0.0/0

E. Firewall rule: allow 10.2.0.0/16 to 0.0.0.0/0

F. Network security group rule: deny 10.2.0.0/16 to 0.0.0.0/0


Explanation:

The goal is to allow specific internal communication while explicitly blocking all external inbound traffic. This is a fundamental cloud security concept using security groups (stateful firewalls at the instance/interface level) with a default-deny and explicit-allow model.

Let's analyze the requirements:

Allow Server A (10.2.3.9) to access Server B (10.2.2.7): This requires an explicit allow rule.

Block all external inbound traffic to those servers: This is the default behavior of cloud security groups, but it's reinforced by an explicit deny rule for clarity and to ensure no other allow rules permit external access.

They are in different network sections (subnets): This means the traffic must traverse the cloud network, making Network Security Groups (or their cloud-equivalent, like AWS Security Groups or Azure NSGs) the primary tool for this task.

Why A and C are correct:

Option A (NSG rule: allow 10.2.3.9 to 10.2.2.7): This is an explicit allow rule that meets the first requirement precisely. It permits the specific IP of Server A to access the specific IP of Server B.

Option C (NSG rule: deny 0.0.0.0/0 to 10.2.0.0/16): This is an explicit deny rule that meets the second requirement. It blocks traffic from any source (0.0.0.0/0 meaning "anywhere") from reaching the entire VNet/VPC address range where the servers reside (10.2.0.0/16). On many cloud platforms an explicit deny rule is not strictly necessary because inbound traffic is denied by default. However, adding the rule makes the intent clear and guards against a broader allow rule being added later by accident. Because 0.0.0.0/0 also matches Server A's address, the allow rule in option A must be evaluated before this deny rule (for example, by giving it a higher priority where the platform orders rules by priority).

Why the Other Options Are Incorrect:

Option B (NSG rule: allow 10.2.0.0/16 to 0.0.0.0/0): This is an outbound rule (it allows the source 10.2.0.0/16 to go to any destination 0.0.0.0/0). The question is focused on securing inbound access to the servers. This rule does not help and is overly permissive for outbound traffic, which is not required by the scenario.

Option D (Firewall rule: deny 10.2.0.0/16 to 0.0.0.0/0): This rule is likely for a network-level firewall. It is misconfigured. It would block all traffic originating from the internal servers (10.2.0.0/16) trying to go out to the internet (0.0.0.0/0). This is the opposite of what we want. We want to block traffic coming from the internet to the servers.

Option E (Firewall rule: allow 10.2.0.0/16 to 0.0.0.0/0): This is also an outbound rule. It would explicitly allow the servers to talk to the internet, which is not a requirement stated in the question. The requirement is only to block inbound traffic.

Option F (NSG rule: deny 10.2.0.0/16 to 0.0.0.0/0): Similar to Option D, this syntax for an NSG would be interpreted as an outbound rule. It would block Server A and Server B from initiating any outbound connections, which would break the required communication between them. We need to control inbound traffic to the servers.

Key Takeaway:

The correct options use inbound rules on Network Security Groups (which are attached to the servers themselves) to explicitly allow the required internal traffic and deny all other external traffic. Cloud security groups are stateful, so the return traffic for the allowed connection (Server B responding to Server A) is automatically permitted.
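To see how the two selected rules interact, here is a minimal sketch of inbound rule evaluation. It assumes an Azure-NSG-style model in which rules are checked in priority order and the first match wins; the `Rule` class, the `evaluate_inbound` function, and the priority numbers 100 and 200 are hypothetical and are not taken from any provider's API.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class Rule:
    priority: int      # assumption: lower number is evaluated first
    action: str        # "allow" or "deny"
    source: str        # source range in CIDR notation
    destination: str   # destination range in CIDR notation


def evaluate_inbound(rules, src, dst):
    """Return the action of the first matching rule, else the default deny."""
    src_ip, dst_ip = ip_address(src), ip_address(dst)
    for rule in sorted(rules, key=lambda r: r.priority):
        if src_ip in ip_network(rule.source) and dst_ip in ip_network(rule.destination):
            return rule.action
    return "deny"  # implicit default when nothing matches


RULES = [
    Rule(100, "allow", "10.2.3.9/32", "10.2.2.7/32"),  # option A
    Rule(200, "deny", "0.0.0.0/0", "10.2.0.0/16"),     # option C
]

print(evaluate_inbound(RULES, "10.2.3.9", "10.2.2.7"))     # Server A to Server B
print(evaluate_inbound(RULES, "203.0.113.5", "10.2.2.7"))  # external source
```

In this model the ordering matters: if the deny rule were evaluated first, it would also match Server A, since 10.2.3.9 falls inside 0.0.0.0/0.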

A SaaS company is launching a new product based in a cloud environment. The new product will be provided as an API and should not be exposed to the internet. Which of the following should the company create to best meet this requirement?

A. A transit gateway that connects the API to the customer's VPC

B. Firewall rules allowing access to the API endpoint from the customer's VPC

C. A VPC peering connection from the API VPC to the customer's VPC

D. A private service endpoint exposing the API endpoint to the customer's VPC

Explanation:

The requirement is that the API-based product should not be exposed to the internet. This means the API must be accessible only through private network connections, not publicly.

Why D is Correct:

A private service endpoint (e.g., AWS PrivateLink, Azure Private Endpoint) is specifically designed for this scenario. It allows customers to access the service (API) privately within their own VPC (Virtual Private Cloud) without traversing the public internet. The endpoint is exposed directly to the customer's VPC via a secure, private connection, ensuring the API is never publicly accessible.

Why the Other Options Are Incorrect:

A. A transit gateway that connects the API to the customer's VPC:

A transit gateway is used to interconnect multiple VPCs and on-premises networks. While it can facilitate private connectivity, it is a network hub and not the most direct or secure method for exposing a private API to a specific customer. It may involve more configuration and cost.

B. Firewall rules allowing access to the API endpoint from the customer's VPC:

Firewall rules alone cannot make the API private; they only control access. If the API endpoint has a public IP, it is still exposed to the internet (even if blocked by rules). This does not meet the requirement of avoiding internet exposure.

C. A VPC peering connection from the API VPC to the customer's VPC:

VPC peering connects two VPCs privately. However, it requires both VPCs to be with the same cloud provider and to use non-overlapping IP address ranges, and it opens network-level routing between the two VPCs rather than exposing a single service. It does not scale well for a SaaS company with many customers, because each customer would require a separate peering connection.

Reference:

Private service endpoints (like AWS PrivateLink) are the industry standard for providing secure, private access to SaaS APIs without internet exposure. This is recommended by cloud security best practices (e.g., AWS Well-Architected Framework) and is essential for compliance in regulated industries.

This approach ensures that the API is only accessible through private networks, enhancing security and performance for customers.

A network engineer is working on securing the environment in the screened subnet. Before penetration testing, the engineer would like to run a scan on the servers to identify the OS, application versions, and open ports. Which of the following commands should the engineer use to obtain the information?

A. tcpdump -ni eth0 src net 10.10.10.0/28

B. nmap -A 10.10.10.0/28

C. nc -v -n 10.10.10.x 1-1000

D. hping3 -1 10.10.10.x -rand-dest -I eth0

Explanation:

The requirement is to scan servers to identify the OS, application versions, and open ports. This is a direct description of the functionality provided by the Nmap security scanner.

Here’s why the nmap -A command is the correct choice:

nmap (Network Mapper): The industry-standard tool for network discovery and security auditing.

-A (Aggressive Scan): This is a single option that enables several critical features:

OS Detection: Attempts to determine the operating system of the target hosts.

Version Detection: Probes open ports to determine the application name and version (e.g., Apache httpd 2.4.52).

Script Scanning: Runs a default set of Nmap Scripting Engine (NSE) scripts for further discovery.

Traceroute: Performs a traceroute to the host.

10.10.10.0/28: This is the target, specifying the network range of the screened subnet (DMZ) to be scanned.

This single command efficiently fulfills all the requirements: OS detection, application versioning, and port discovery.
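As a small illustration of what the command covers, the sketch below builds the argument list and enumerates the usable addresses in the /28. The helper names are hypothetical; the only nmap-specific facts assumed are the documented equivalents of `-A` (`-O`, `-sV`, `-sC`, `--traceroute`). The code only constructs the command and does not run a scan.

```python
from ipaddress import ip_network

# Individual options that -A enables, per the nmap documentation.
AGGRESSIVE_EQUIVALENT = ["-O", "-sV", "-sC", "--traceroute"]


def build_scan_command(target_cidr, aggressive=True):
    """Return an nmap argument list for the given target range."""
    network = ip_network(target_cidr)  # raises ValueError on a malformed range
    flags = ["-A"] if aggressive else list(AGGRESSIVE_EQUIVALENT)
    return ["nmap", *flags, str(network)]


def usable_hosts(target_cidr):
    """List host addresses, excluding the network and broadcast addresses."""
    return [str(host) for host in ip_network(target_cidr).hosts()]


print(build_scan_command("10.10.10.0/28"))
print(len(usable_hosts("10.10.10.0/28")), "usable addresses in the range")
```

A /28 leaves 14 usable host addresses (10.10.10.1 through 10.10.10.14), so a single invocation covers every server in the screened subnet.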

Why the Other Options Are Incorrect:

Option A (tcpdump -ni eth0 src net 10.10.10.0/28):

This is a packet capture tool. It listens on the network interface eth0 and captures packets coming from (src) the specified network. It does not actively scan for open ports or service versions; it only passively records traffic that happens to be flowing. It will not provide a list of open ports or OS versions.

Option C (nc -v -n 10.10.10.x 1-1000):

This uses netcat (the "Swiss army knife" of networking) to attempt a TCP connection to ports 1 through 1000 on a single host (x). The -v (verbose) flag might show if a connection was successful, indicating an open port. However, it is a very rudimentary port scanner. It does not perform OS detection, application version detection, or UDP scanning. It is also inefficient for scanning a range of hosts (/28), as the command is written for a single IP address.

Option D (hping3 -1 10.10.10.x -rand-dest -I eth0):

This is a packet crafting tool. This specific command:

-1: Sends ICMP packets (echo requests by default).

-rand-dest: Enables random destination mode (written --rand-dest in hping3), which replaces each x in the target address with a random number from 0 to 255. It does not select ports.

-I eth0: Uses the eth0 interface.

This command sends ICMP echo requests to randomly chosen addresses matching 10.10.10.x. It is primarily useful for host discovery ("is this host up?") or network stress testing. It does not identify open TCP/UDP ports, OS versions, or application versions.

Reference:

The nmap tool and its flags are documented extensively in its official manual pages (man nmap) and on its website (nmap.org). The -A option is explicitly described as enabling "Aggressive mode" which includes OS and version detection, script scanning, and traceroute.

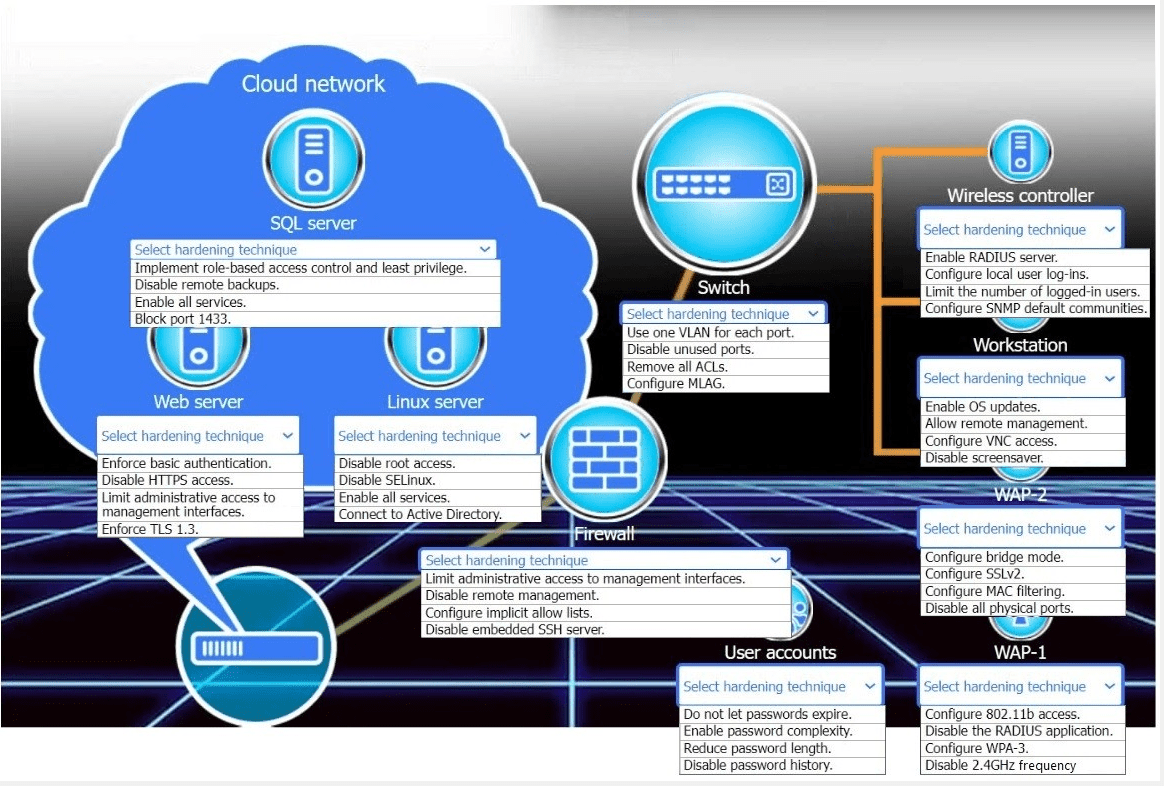

New devices were deployed on a network and need to be hardened.

INSTRUCTIONS

Use the drop-down menus to define the appliance-hardening techniques that provide the most secure solution.

If at any time you would like to bring back the initial state of the simulation, please click the Reset All button.

After a company migrated all services to the cloud, the security auditor discovers many users have administrator roles on different services. The company needs a solution that:

Protects the services on the cloud

Limits access to administrative roles

Creates a policy to approve requests for administrative roles on critical services within a limited time

Forces password rotation for administrative roles

Audits usage of administrative roles

Which of the following is the best way to meet the company's requirements?

A. Privileged access management

B. Session-based token

C. Conditional access

D. Access control list

Explanation:

The scenario describes a classic case of excessive and unmanaged privileged access (users having administrator roles) in a cloud environment. The requirements are a direct match for the capabilities of a Privileged Access Management (PAM) solution.

A PAM solution is specifically designed to secure, manage, and monitor access to privileged accounts and credentials. Let's map each requirement to a PAM feature:

"Protects the services on the cloud" & "Limits access to administrative roles": PAM solutions enforce the principle of least privilege by allowing organizations to discover, vault, and manage privileged credentials. Users no longer have direct, standing administrative access.

"Creates a policy to approve requests for administrative roles... within a limited time": This is just-in-time (JIT) access. A core feature of PAM is that users must request elevated privileges, which triggers an approval workflow. Once approved, access is granted for a specific, limited timeframe and then automatically revoked.

"Forces password rotation for administrative roles": PAM solutions can automatically manage the passwords for administrative accounts. After a privileged session is over, the PAM system can automatically rotate the password, ensuring that the credential used is never static and is unknown to the user.

"Audits usage of administrative roles": PAM solutions provide comprehensive session monitoring and auditing. They record all activities performed during a privileged session (often with video-like playback), creating a detailed audit trail for compliance and forensic analysis.

Why the Other Options Are Incorrect:

Option B (Session-based token):

A session token (like those used in web authentication) is used to maintain a user's state and permissions during a single login session. While important for security, it does not address the management, approval, rotation, or auditing of privileged roles. It is a technical component, not a comprehensive management solution.

Option C (Conditional access):

Conditional Access (CA) is a feature in identity providers (like Azure AD) that grants access to applications based on specific conditions (e.g., user location, device compliance, risk level). While CA can help protect access (e.g., by requiring MFA for admin roles), it does not provide the just-in-time access, credential vaulting, password rotation, or session auditing that a dedicated PAM solution offers. CA controls if you can sign in, but not what you do with privileged credentials after.

Option D (Access control list):

An Access Control List (ACL) is a basic network or file system security mechanism that defines which users or systems are granted or denied access to a specific object. It is a simple permit/deny list based on attributes like IP address. ACLs are far too rudimentary and lack the workflow, temporal, auditing, and credential management capabilities required to solve this problem. They cannot enforce just-in-time access or password rotation.

Reference:

The described solution aligns with best practices from cybersecurity frameworks like NIST (Special Publication 1800-18: Privileged Account Management for the Financial Services Sector) and is a core requirement of major compliance standards. Cloud providers also offer native PAM tools (e.g., Azure AD Privileged Identity Management - PIM) or integrate with third-party solutions to meet these exact requirements.
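The just-in-time approval and auditing behavior described in the explanation can be sketched as a toy broker. This is an illustrative model only, not how any PAM product is implemented: the `PrivilegedAccessBroker` class, its method names, and the one-hour cap are hypothetical, and credential vaulting and password rotation are left out.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass
class PrivilegedAccessBroker:
    max_duration: timedelta = timedelta(hours=1)   # assumed policy cap
    grants: dict = field(default_factory=dict)     # (user, role) -> expiry time
    audit_log: list = field(default_factory=list)  # every decision is recorded

    def _record(self, now, event, user, role):
        self.audit_log.append((now.isoformat(), event, user, role))

    def approve(self, user, role, approver, duration, now=None):
        """Grant a role for a limited time after a second person approves."""
        now = now or datetime.now(timezone.utc)
        if approver == user:
            raise PermissionError("requests cannot be self-approved")
        expiry = now + min(duration, self.max_duration)
        self.grants[(user, role)] = expiry
        self._record(now, f"approved by {approver}", user, role)
        return expiry

    def is_active(self, user, role, now=None):
        """Check whether the grant is still valid; expired grants are denied."""
        now = now or datetime.now(timezone.utc)
        expiry = self.grants.get((user, role))
        active = expiry is not None and now < expiry
        self._record(now, "check: active" if active else "check: denied", user, role)
        return active


broker = PrivilegedAccessBroker()
start = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
broker.approve("alice", "db-admin", approver="bob", duration=timedelta(hours=8), now=start)
print(broker.is_active("alice", "db-admin", now=start + timedelta(minutes=30)))
print(broker.is_active("alice", "db-admin", now=start + timedelta(hours=2)))
```

The requested eight hours is capped at the policy maximum, access lapses on its own once the window passes, and each approval and check leaves an audit entry.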

A SaaS company's new service currently is being provided through four servers. The company's end users are having connection issues, which is affecting about 25% of the connections. Which of the following is most likely the root cause of this issue?

A. The service is using round-robin load balancing through a DNS server with one server down.

B. The service is using weighted load balancing with 40% of the traffic on server A, 20% on server B, 20% on server C, and server D is down.

C. The service is using a least-connection load-balancing method with one server down.

D. Load balancing is configured with a health check in front of these servers, and one of these servers is unavailable.

Explanation:

The issue affects about 25% of the connections, and there are four servers. This strongly suggests that one of the four servers is unavailable, and the load-balancing method is not effectively detecting or compensating for this failure.

Why A is Correct:

This scenario describes DNS round-robin load balancing. This is a simple method where the DNS server returns the list of server IP addresses in a rotating order.

The Problem:

DNS round-robin has no inherent health checking. If one server goes down, the DNS server continues to include its IP address in the rotation. Approximately every fourth user (25%) will receive the IP address of the failed server and will be unable to connect. This matches the symptom exactly.
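The 25% figure follows directly from the rotation, as this short simulation suggests. It is a simplified sketch: the server names and function names are hypothetical, and real DNS round-robin is less regular because resolvers and clients cache answers.

```python
from itertools import cycle


def dns_round_robin_failure_rate(servers, down, requests):
    """Rotate through every server; nothing removes a failed one."""
    rotation = cycle(servers)
    failed = sum(1 for _ in range(requests) if next(rotation) in down)
    return failed / requests


def health_checked_failure_rate(servers, down, requests):
    """Rotate only through servers that pass the health check."""
    healthy = [s for s in servers if s not in down]
    if not healthy:
        return 1.0  # nothing left to serve traffic
    rotation = cycle(healthy)
    failed = sum(1 for _ in range(requests) if next(rotation) in down)
    return failed / requests


servers = ["A", "B", "C", "D"]
down = {"D"}
print(dns_round_robin_failure_rate(servers, down, 1000))
print(health_checked_failure_rate(servers, down, 1000))
```

With one of four servers down, the unchecked rotation fails one request in four, while the health-checked pool fails none.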

Why the Other Options Are Incorrect:

B. The service is using weighted load balancing... with server D down:

In a properly configured weighted load balancing system (typically done by a dedicated load balancer, not DNS), the load balancer would use health checks to detect that server D is down and redistribute its assigned traffic (20% in this case) to the remaining healthy servers. Users might experience a slight slowdown due to increased load on the remaining servers, but not a complete connection failure for 25% of users. The failure rate would be 0% if health checks are working.

C. The service is using a least-connection load-balancing method with one server down:

Similar to option B, a least-connections load balancer uses health checks. If a server is down, it is removed from the pool. The load balancer would only direct traffic to the three healthy servers. This would not result in 25% of users failing to connect; it would result in 0% failure but potentially higher latency on the remaining servers.

D. Load balancing is configured with a health check in front of these servers, and one of these servers is unavailable:

This is the correct way to configure a load balancer. If a health check is configured and working, the load balancer would immediately detect the unavailable server and stop sending traffic to it. No users would be directed to the failed server, meaning the connection failure rate would be 0%, not 25%. Therefore, this cannot be the root cause.

Reference:

The limitations of DNS-based load balancing (lack of health checks) are a well-known network architecture consideration. This is contrasted with the capabilities of hardware or software load balancers (like F5, HAProxy, or cloud load balancers) that perform active health monitoring.

This is a common topic in discussions about high availability and fault tolerance for web services.

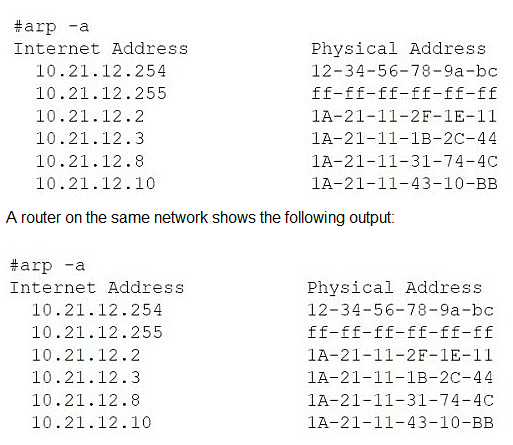

A network administrator is troubleshooting a user's workstation that is unable to connect to the company network. The results of ipconfig and arp -a are shown. The user’s workstation:

Has an IP address of 10.21.12.8

Has subnet mask 255.255.255.0

Default gateway is 10.21.12.254

ARP table shows 10.21.12.8 mapped to 1A-21-11-31-74-4C (a different MAC address than the local adapter)

A. Asynchronous routing

B. IP address conflict

C. DHCP server down

D. Broadcast storm

Explanation:

The evidence from the ipconfig /all and arp -a outputs strongly indicates an IP address conflict on the network.

Let's break down the key clues:

The Workstation's Identity:

The ipconfig /all command shows the workstation has:

IP Address: 10.21.12.8

Physical (MAC) Address: 1A-21-11-33-44-5A

The ARP Table Anomaly:

The arp -a command displays the workstation's own ARP cache.

In this cache, the IP address 10.21.12.8 (its own IP) is not mapped to its own MAC address (1A-21-11-33-44-5A).

Instead, it is mapped to a different MAC address: 1A-21-11-31-74-4C.

This is the definitive symptom of an IP address conflict. Here’s why:

The ARP protocol resolves IP addresses to MAC addresses for other hosts on the local segment. A workstation does not normally hold a dynamic ARP entry for its own IP address, so any such entry deserves a close look.

The presence of its own IP address (10.21.12.8) mapped to a foreign MAC address (1A-21-11-31-74-4C) means another device on the same network segment is also using the IP address 10.21.12.8.

The workstation has likely received an ARP reply or broadcast from this other device claiming that IP address, causing its ARP cache to be updated incorrectly. This disrupts the workstation's ability to manage its own network identity and process incoming traffic correctly, leading to connectivity issues.
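The comparison the administrator performs by eye can be written as a small check. This is a sketch under stated assumptions: the function names are hypothetical, the ARP table is passed in as an already parsed dictionary, the adapter MAC is read as 1A-21-11-33-44-5A, and the gateway MAC is a made-up placeholder because the source does not list it.

```python
def normalize_mac(mac):
    """Make MAC strings comparable regardless of separator or case."""
    return mac.replace("-", ":").lower()


def find_ip_conflict(local_ip, local_mac, arp_table):
    """Return the foreign MAC claiming local_ip, or None if there is none.

    arp_table maps IP address strings to MAC address strings.
    """
    cached = arp_table.get(local_ip)
    if cached is None:
        return None
    if normalize_mac(cached) != normalize_mac(local_mac):
        return cached
    return None


arp_table = {
    "10.21.12.8": "1A-21-11-31-74-4C",    # from the arp -a output
    "10.21.12.254": "00-00-5E-00-53-01",  # placeholder gateway MAC (assumption)
}

conflict = find_ip_conflict("10.21.12.8", "1A-21-11-33-44-5A", arp_table)
print("conflicting MAC:", conflict)
```

The returned MAC address is the one to trace on the switch to locate the other device using 10.21.12.8.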

Why the Other Options Are Incorrect:

Option A (Asynchronous routing):

This occurs when the path out of a network is different from the path back in, often due to misconfigured routers. The problem here is isolated to a single IP address on a single subnet, and the ARP table provides no evidence of a routing issue. The gateway (10.21.12.254) is present and has a valid MAC in the ARP table.

Option C (DHCP server down):

If the DHCP server were down, the workstation would not have a valid IP address. It would likely have an APIPA address in the 169.254.x.x range. The output shows the workstation does have a valid IP address (10.21.12.8), subnet mask, and default gateway, proving DHCP (or static configuration) was functional.

Option D (Broadcast storm):

A broadcast storm is a network-level event caused by switching loops, where broadcast traffic (like ARP requests) floods the network, crippling all connectivity. The provided outputs show a stable and populated ARP table, which would not be possible during a storm. The problem is specific to one IP-MAC mapping, not a widespread network failure.

Conclusion:

The ARP table showing the workstation's own IP address mapped to another device's MAC address is a classic and clear sign of an IP address conflict. The administrator needs to find the device with the MAC address 1A-21-11-31-74-4C and change its IP address or configure the DHCP server to prevent it from handing out 10.21.12.8 again.


CompTIA CloudNetX Exam Practice Questions

CompTIA CloudNetX CNX-001 Official Exam Blueprints And Our Practice Questions

| CompTIA CloudNetX CNX-001 Domain | Official Exam Weight | Our Practice Questions |
|---|---|---|
| Network Architecture Design | 31% | 28 |
| Our Practice Questions Cover Subtopics: Hybrid cloud architecture, Enterprise network design, IPv4 and IPv6 addressing, Routing and switching, SD-WAN, SASE architecture, Network segmentation, Wireless networking, Connectivity solutions, Load balancing, High availability, Disaster recovery architecture, Cloud networking, DNS and DHCP, BGP and OSPF, Virtual networking, Data center networking | | |
| Network Security | 28% | 29 |
| Our Practice Questions Cover Subtopics: Zero Trust architecture, Identity and access management (IAM), Microsegmentation, Firewalls, VPN technologies, Network access control, Authentication protocols, Encryption, Secure protocols, Threat mitigation, Security policies, Security frameworks, Cloud security, Risk management, Compliance requirements, Security monitoring, Secure connectivity | | |
| Network Operations, Monitoring, and Performance | 16% | 15 |
| Our Practice Questions Cover Subtopics: Network monitoring, Performance optimization, Network maintenance, Automation and scripting, Observability, SIEM integration, Log analysis, Capacity planning, Traffic analysis, SLA monitoring, Network analytics, SNMP, Telemetry, Operational management, Cloud monitoring tools | | |
| Network Troubleshooting | 25% | 12 |
| Our Practice Questions Cover Subtopics: Troubleshooting methodology, Connectivity issues, Routing problems, Wireless troubleshooting, DNS issues, Latency analysis, Packet captures, Network diagnostics, Performance bottlenecks, Hardware failures, Cloud connectivity issues, Configuration troubleshooting, Monitoring alerts, Root cause analysis | | |

Cloud networking and hybrid infrastructure expertise is rare—and this exam proves it. This practice test targets CNX-001 objectives: network architecture, security, automation, and troubleshooting across multi-cloud environments. You will face questions on SD-WAN, zero-trust security, cloud connectivity, and network monitoring tools. Each scenario tests your ability to design and troubleshoot complex cloud networks. The detailed explanations clarify why certain solutions work better in specific architectures. By revealing gaps in your cloud networking knowledge, this test helps you focus study time where it matters most—preparing you for this advanced certification challenge.

Stories of Success

Cloud networking is a specialized skill, and this beta exam required focused preparation. Preptia CNX-001 practice test helped me master SDN, cloud connectivity, and network virtualization. I felt ready for every question type and passed easily. Great for anyone pursuing cloud networking!

Rachel Stevens, Cloud Network Engineer | San Francisco, CA

Advanced cloud networking topics were easier to understand using Preptia.com practice materials for CloudNetX (CNX-001). The scenario-based questions helped reinforce hybrid cloud connectivity concepts.

Oliver Scott | Australia